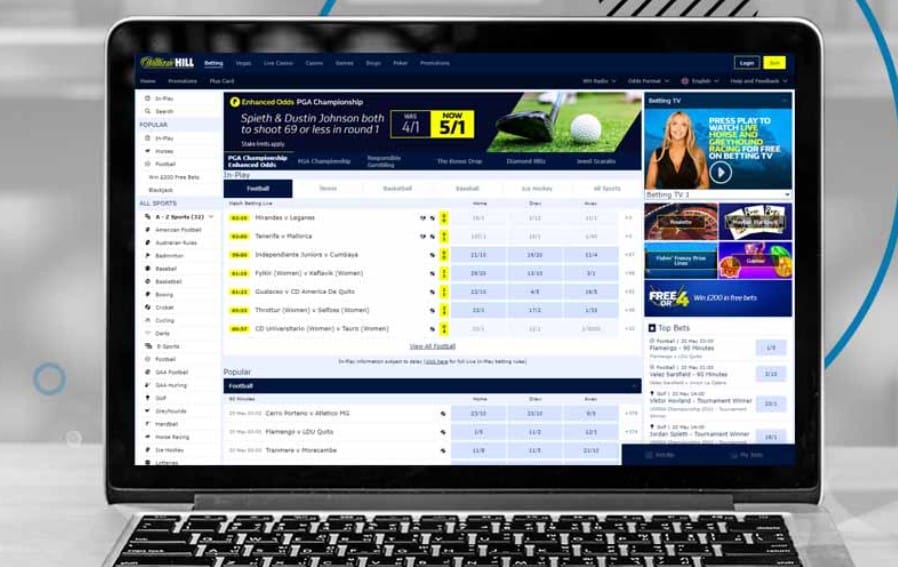

Sports betting remains exciting, and combined with your knowledge of the game it can still pay off. The choice of live betting site matters, though: higher odds mean larger winnings. Dozens of live betting sites operate in Turkey. Some offer legal betting and others operate illegally. With betting bonuses, high odds and especially the cash-out feature, the illegal betting sites offer a better betting experience.

Live Betting Sites

- Mobilbahis

- Süperbahis

- Grandpashabet

- Bets10

- Betboo

- Casinomaxi

The site you choose for live betting has a marked effect on how much you can win. The games and registration pages of live betting sites are accessed through http://www.elculturalsanmartin.org/. Choosing a quality site gives you a chance to win at live betting through high odds and solid betting bonuses. There are many live betting options in the country, both legal and illegal sites. In 2023 most players chose illegal sites, so most live bets are placed there.

Their advantages are live betting bonuses and very good odds, which make live betting both enjoyable and profitable. Because players now watch matches before placing live bets, these sites also stream matches through their match TV feature. Watching a match live improves your chances of predicting its outcome, and with it your rate of winning bet slips. They cover many leagues, since they stream even pay channels free of charge. The games and odds of gambling sites are accessed through https://kervansarayhotel.com/.

The live betting login addresses lead to a wide range of quality illegal betting sites. Among them, we recommend the licensed ones. All live betting sites listed on this page are long-established and have a record of reliability. Because they have offered live betting for many years, you can expect a service built on trust.

Live Betting Site Bonuses

The bonuses you receive from the sites directly improve your winnings. You bet with the bonus first while your own deposit stays untouched. Taking a bonus from the most reliable live betting sites after each deposit is a major advantage, and in most cases the bonus improves your odds of turning a profit. Below we explain which bonuses are worth choosing among the dozens the sites offer. Live betting sites that give free bonuses are among the most profitable betting sites.

Registration Bonus: A 100% betting bonus of between 1000 TL and 5000 TL, available on a new membership. It is granted once.

No-Wagering Live Betting Bonus: A 15% bonus granted after a deposit, with no wagering requirement. The amount depends on the deposit: a 1000 TL deposit earns 150 TL.

Wagering Live Betting Bonus: Sites may offer a 20% deposit bonus that carries a small wagering requirement. The extra 5% makes it a larger bonus than the no-wagering one.

Loss Bonus: A bonus that refunds 25% of your money after losing bets. It is available to players who have not taken any other bonus.

Trial Bonus: New members can receive up to 100 TL in free live bets after registering. It is granted on request through live support.

Free Bet: Technically the same as the live trial bonus, although some sites offer it in larger amounts. It is also known as the free bet bonus.

Trusted betting sites offer the bonuses listed above to their players. After depositing, tell the live support team which bonus you want. The bonus is then sent to the My Bonuses area, where you activate it before use.
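The percentage figures quoted for these bonuses are simple arithmetic, and a minimal sketch can make them concrete. The function name `percentage_bonus` and the optional `cap_tl` ceiling are assumptions introduced here for illustration; the article gives only the rates and the 1000 TL example, and wagering requirements are not modeled.

```python
from typing import Optional


def percentage_bonus(deposit_tl: float, rate_percent: float,
                     cap_tl: Optional[float] = None) -> float:
    """Return the bonus in TL for a deposit at a given percentage rate.

    cap_tl is a hypothetical upper limit (for example, the 5000 TL ceiling
    quoted for the registration bonus); it is ignored when None.
    """
    if deposit_tl < 0 or rate_percent < 0:
        raise ValueError("deposit and rate must be non-negative")
    bonus = deposit_tl * rate_percent / 100
    if cap_tl is not None:
        bonus = min(bonus, cap_tl)
    return bonus


# The article's own example: a 15% no-wagering bonus on a 1000 TL deposit.
no_wagering = percentage_bonus(1000, 15)

# The 20% wagering bonus on the same deposit, for comparison.
wagering = percentage_bonus(1000, 20)

# A 25% loss bonus applied to a hypothetical 400 TL of losses.
loss_refund = percentage_bonus(400, 25)

print(no_wagering, wagering, loss_refund)
```

On the same 1000 TL deposit, the difference between the 15% and 20% rates is the "extra 5%" mentioned for the wagering bonus.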

Betting Games

In every country certain betting games stand out. In Turkey, sports betting is played more than any other kind, and football and basketball bets are especially common. Some bet types offer a good chance of winning money. Virtual bets are available when there are no current matches on the schedule. You can mix all of these bet types in a single combination slip.

- Sports Betting

- E-Sports Betting

- Virtual Sports Betting

These were the categories that attracted the most bets in 2022. Football and basketball lead, but table tennis and volleyball matches have also become popular in recent years. E-sports options include LoL, Counter-Strike and Dota 2 bets, as well as Valorant and similar titles.

Popular computer games are also open to betting on online live casino sites, so fans of these games can put their knowledge to use. Other bet types fall under slots and live casino, so we have not covered them under sports betting sites, although most betting sites offer those categories too.

Betting Site Membership and Registration

Registering on a betting site means filling in a form, which the site presents after you click the sign-up button. It mostly asks for personal information. On licensed betting sites, the information given at registration must be accurate and belong to the user, because the sites check it during deposits and withdrawals. What do they ask for?

- T.C. identity number

- E-mail

- Phone number

- Full name

- Date of birth

- Gender

- Choosing a username and password

Enter current, accurate information in the fields above on the registration screen. Registration can be completed easily by accessing the live betting sites through http://britishjewishstudies.org/. The checks are generally made during financial transactions, so accurate details speed up your withdrawals considerably. The sites also protect this information and do not share it with third parties.

One note is worth adding. You should register only once on each betting site and use a single account. Betting sites watch for this closely, and opening or using multiple accounts is against the rules. It is therefore important to follow each site's general terms and conditions.

Betting Site Deposits and Withdrawals

Betting sites offer many methods for deposits and withdrawals; unlike legal betting, they are not limited to a single deposit option. Below we describe what the sites offer and suggest methods to choose. Some methods are preferred because bonuses are credited and withdrawals processed very quickly with them. How do you make a deposit?

- List the methods with the deposit button.

- Choose a method (Papara, Payfix, Jeton Cüzdan, Ecopayz, etc.).

- Enter your full name and the deposit amount.

- The betting company shows you an account number on screen.

- Transfer the stated amount to that account.

- The money reaches your betting account within 1-2 minutes.

Withdrawals follow similar steps:

- Open the list of methods with the withdrawal button.

- Choose a method and continue.

- Enter the withdrawal amount, your T.C. identity number and your full name.

- Press the confirm button; the money is sent to your account within 30 minutes at most.

The available methods also include Papara, bank transfer, Pep, Astropay, Bitcoin, Cepbank and QR code. Choose the transfer method that suits you.

Trusted Betting Sites Login

As the current addresses of live betting sites change, players search for new login addresses. Links should come from a source that provides reliable connections; of the many places online that share betting site links, only a few are safe. For that reason, take the login addresses of trusted betting sites directly from the links on this page. Alternatively, you can get them from the social media accounts of the foreign betting sites, but watch out for fake social media accounts.

Because they operate illegally, many sites change their current address during the week. In those cases you can use the links on this page around the clock to reach the sites. The links are updated automatically, so the login addresses stay current after every change. We recommend getting links here to avoid landing on a fake site.

Fake sites let you into the system, including the live casino section, when you type a random username and password. A crudely built live support area is another giveaway. To avoid such malicious fake sites, do not take links from random pages found through Google. You can get the most current and reliable login addresses here.

Betting Sites That Offer Bonuses

Every site offering illegal betting in Turkey has its own bonuses, and sets the percentages and amounts according to its revenue. The largest sites offer bigger betting bonuses. These large, trusted sites have been operating for a long time, so their bonuses tend to be somewhat higher. You can find sites with good bonuses among the betting sites we link to; they offer good deals on registration and trial bonuses.

Legal betting has no bonuses, so every option here is an illegal betting site. These sites offer a bonus at registration and further deposit bonuses afterwards. There are dozens of options depending on the bonus type you want. Sites that pay bonuses at the upper limit can offer a better chance of winning. The more bonus funds you have to use, the more slips and bets you can place.

Legal Betting Sites

İddaa is the only legal betting operator in Turkey. It also operates online through 6 betting sites. Legal betting sites are few and lag behind on bonuses and odds, and they are played far less than before, although some people still bet legally. The legal options are Birebin, Nesine, Oley, Tuttur, Misli and Bilyoner.

The two most used of these are Nesine and Bilyoner. They are used more than the others because their mobile betting apps are better, and since almost everyone now bets online, these apps make a difference. They trail the illegal betting sites, but they stand out among the legal ones.

Little-Known Betting Sites

Those who follow newly opened betting sites may prefer newly established ones among the live betting sites. Their registration bonuses can be advantageous, since they give slightly more as a bonus. When choosing a little-known betting site, check its license and payout performance: established sites offer assurance of payment, but you have no such information about new ones. Make sure the site is reliable.

Every year new sites enter the online betting market. Always choose a licensed one, holding a license such as Curaçao, MGA or Antillephone. Never register on a little-known site that is unlicensed.

New Betting Sites

Dozens of betting sites offer a new betting experience in Turkey. They run on platforms such as Betconstruct, Betsson and Pronet. When joining new sites among the bonus-paying live betting sites, prefer licensed ones. Also check the site's payout performance and player reviews. New betting sites are chosen mostly for the bonuses they give.

You too can get perks from these sites, such as a registration bonus and a trial bonus for betting without cash. Most of them are reliable. They are among the sites listed under the best live betting login addresses. You can have a new betting experience on these editor-selected sites.